Graeme Blair

Professor of Political Science

UCLA

Co-director, Deportation Data Project


I study state violence and how to make social science more credible, ethical, and useful.

Policing, violence, and the state

Book

Crime, insecurity, and community policing: Experiments on building trust. Cambridge University Press, Studies in Comparative Politics. 2024. With Fotini Christia and Jeremy M. Weinstein (eds.).

With Eric Arias, Emile Badran, Robert A. Blair, Ali Cheema, Thiemo Fetzer, Guy Grossman, Dotan Haim, Rebecca Hanson, Ali Hasanain, Ben Kachero, Dorothy Kronick, Benjamin Morse, Robert Muggah, Matthew Nanes, Tara Slough, Nico Ravanilla, Jacob N. Shapiro, Barbara Silva, Pedro C. L. Souza, Lily Tsai, and Anna Wilke.

How can societies effectively reduce crime without exacerbating adversarial relationships between the police and citizens? In recent decades, perhaps the most celebrated innovation in police reform has been the introduction of community policing, where citizens are involved in building channels of dialogue and improving police-citizen collaboration. Despite the widespread adoption of community policing in the United States and increasingly in the developing world, there is still limited credible evidence about whether it realistically increases trust in the police or reduces crime. Through simultaneously coordinated field experiments in a diversity of political contexts, this book presents the outcome of a major research initiative into the efficacy of community policing. Scholars from around the world uncover whether, and under what conditions, this highly influential strategy for tackling crime and insecurity is effective. With its highly innovative approach to cumulative learning, this project represents a new frontier in the study of police reform.

Articles

“Immigration and Customs Enforcement individual-level administrative data: An introduction.” With David Hausman and Phil Neff. Forthcoming, Nature Scientific Data.

This dataset, based on records obtained by Freedom of Information Act request, tracks United States Immigration and Customs Enforcement (ICE) individual enforcement actions from September 2023 through July 2025. The dataset includes five tables, linked by anonymized individual identifiers, tracking ICE encounters, detainer requests, arrests, detentions, and removals. The dataset creates new opportunities for descriptive and causal research on recent changes in immigration enforcement policy in the United States.

“The point of attack: Where and why does oil cause armed conflict in Africa?” Accepted, Journal of Politics. With Darin Christensen and Michael Gibilisco.

Prominent theories of conflict argue that belligerents fight to capture valuable rents (“prizes”) such as oil and other resources. Yet we show that armed groups rarely attack the sites holding the most oil above or below ground (oil terminals and wells); only pipelines lead to conflict. To explain this finding, we integrate crisis bargaining and Blotto games. In our model, armed groups attack to steal oil and signal strength; anticipating the government’s defenses, these groups rarely target the biggest prizes, which are more fortified. Consistent with our model, we show that armed groups strategically randomize where they attack pipelines and that local and export prices for fuel have different effects on violence, because only export prices affect the government’s willingness to buy off would-be attackers. We revamp the prize logic for conflict to generate more accurate predictions and, in so doing, provide a rare real-world validation of a Blotto model.

“Accessing justice for survivors of violence against women.” Science, 2022. With Nirvikar Jassal.

In an effort to increase women's access to justice for crimes of violence against them, governments around the world have implemented reforms including gender quotas in police hiring, women-run counseling centers, and mandates that women officers exclusively handle cases of gender-based violence. The paper discusses the outcomes of such reforms, using a study conducted in Madhya Pradesh, India, as an example. This study introduced "women's help desks" in police stations, spaces designated for women officers to interact with women complainants. The results were mixed: while more incidents were reported and some police attitudes shifted, the reforms did not significantly increase crime reporting by women or arrest rates. The paper highlights the spectrum of gender-based reforms, from integration (mixing women and men officers) to separation (exclusive spaces or stations for women), noting mixed results in effectiveness. It suggests that while certain reforms may increase report filings, they do not always result in arrests or changes in police action, highlighting the complexity of addressing violence against women within the justice system.

“How does armed conflict shape investment? Evidence from the mining sector.” Journal of Politics, 2022. With Darin Christensen and Valerie Wirtschafter.

How does conflict affect firms' investment decisions? Past results are mixed: a third of studies we reviewed report null or mixed correlations; some suggest conflict increases investment. We rationalize these results, arguing that armed conflict has divergent effects depending on firms’ exposure to violence. Conflict can deter investment by disrupting production or raising uncertainty. Yet, conflict can encourage investment by hampering government oversight. We argue each mechanism operates over different geographic extents. We use data from the mining sector to test these claims and report three main results. Firms operating at conflict sites dramatically reduce investments. By contrast, firms operating in territory surrounding conflict, but at a remove from fighting, actually increase investment. Firms far from violence see a small negative effect. These divergent responses cannot be inferred from aggregate flows: we show conflict depresses aggregate investment, but this reflects responses among firms far from fighting.

“Community policing does not build citizen trust in police or reduce crime in the Global South.” Science, 2021. Lead author with Jeremy M. Weinstein, Fotini Christia, Eric Arias, Emile Badran, Robert A. Blair, Ali Cheema, Thiemo Fetzer, Guy Grossman, Dotan Haim, Rebecca Hanson, Ali Hasanain, Ben Kachero, Dorothy Kronick, Benjamin Morse, Robert Muggah, Matthew Nanes, Tara Slough, Nico Ravanilla, Jacob N. Shapiro, Barbara Silva, Pedro C. L. Souza, Lily Tsai, and Anna Wilke.


Honorable Mention, APSA Comparative Politics Section Luebbert Best Article Prize

Commentary: “Community policing in the developing world.” By Santiago Tobón. Science.

Is it possible to reduce crime without exacerbating adversarial relationships between police and citizens? Community policing is a celebrated reform with that aim, now adopted on every continent. Yet the evidence base is limited: it studies reform components in isolation in a limited set of countries and is largely silent on citizen-police trust. We designed six field experiments with Global South police agencies to study locally designed models of community policing, with coordinated measures of crime and the attitudes and behaviors of citizens and police. In a preregistered meta-analysis, we find that these interventions led to mixed implementation, largely failed to improve citizen-police relations, and did not reduce crime. Structural changes may be required for incremental police reforms such as community policing to succeed.

“Trusted authorities can change minds and shift norms during conflict.” Proceedings of the National Academy of Sciences, 2021. With Rebecca Littman, Elizabeth Nugent, Mohammed Bukar, Benjamin Crisman, Anthony Etim, Chad Hazlett, and Jiyoung Kim.


The reintegration of former members of violent extremist groups is a pressing policy challenge. Governments and policymakers often have to change minds among reticent populations and shift perceived community norms in order to pave the way for peaceful reintegration. How can they do so on a mass scale? Previous research shows that messages from trusted authorities can be effective in creating attitude change and shifting perceptions of social norms. In this study, we test whether messages from religious leaders – trusted authorities in many communities worldwide – can change minds and shift norms around an issue related to conflict resolution: the reintegration of former members of violent extremist groups. Our study takes place in Maiduguri, Nigeria, the birthplace of the violent extremist group Boko Haram. Participants were randomly assigned to listen to either a placebo radio message or to a treatment message from a religious leader emphasizing the importance of forgiveness, announcing the leader’s forgiveness of repentant fighters, and calling on followers to forgive. Participants were then asked about their attitudes, intended behaviors, and perceptions of social norms surrounding the reintegration of an ex-Boko Haram fighter. The religious leader message significantly increased support for reintegration and willingness to interact with the ex-fighter in social, political, and economic life (8 to 10 percentage points). It also shifted people’s beliefs that others in their community were more supportive of reintegration (6 to 10 percentage points). Our findings suggest that trusted authorities such as religious leaders can be effective messengers for promoting peace.

“Do commodity price shocks cause armed conflict? Evidence from a meta-analysis.” American Political Science Review, 2021. With Darin Christensen and Aaron Rudkin.


Scholars of the resource curse argue that reliance on primary commodities destabilizes governments: price fluctuations generate windfalls or periods of austerity that provoke or intensify conflict. This claim has been tested in 350 quantitative studies, but prominent results point in different directions, making it difficult to discern which results reliably hold across contexts. We conduct a meta-analysis of 46 natural experiments that use difference-in-differences designs to estimate the causal effect of international commodity price changes on armed conflict. We show that commodity price changes, on average, do not change conflict risks. However, this overall effect comprises cross-cutting effects by commodity type. In line with theory, we find that price increases for labor-intensive agricultural commodities reduce conflict, while increases in the price of oil, a capital-intensive commodity, provoke conflict. We also find that price changes for lootable artisanal minerals provoke conflict. Our meta-analysis consolidates existing evidence but also highlights gaps for future research to fill.
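The point that an average effect near zero can hide opposite-signed effects by commodity type can be illustrated with inverse-variance (fixed-effect) pooling. This is a minimal Python sketch, not the paper's estimator: the published meta-analysis may use a different model (for example, random effects), and the function name `pool_fixed_effect` and every study-level number below are hypothetical.

```python
import math

def pool_fixed_effect(estimates, std_errors):
    """Inverse-variance (fixed-effect) pooled estimate and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * b for w, b in zip(weights, estimates)) / total_weight
    return pooled, math.sqrt(1.0 / total_weight)

# Hypothetical study-level effects of a price increase on conflict risk,
# grouped by commodity type. Illustrative numbers only, not the paper's data.
studies = {
    "agricultural": ([-0.02, -0.03], [0.01, 0.01]),
    "oil": ([0.03, 0.02], [0.01, 0.01]),
}

all_estimates, all_ses = [], []
for commodity, (estimates, ses) in studies.items():
    est, se = pool_fixed_effect(estimates, ses)
    print(f"{commodity}: {est:+.3f} (SE {se:.3f})")
    all_estimates += estimates
    all_ses += ses

# Opposite-signed subgroup effects cancel in the overall pooled average.
overall, overall_se = pool_fixed_effect(all_estimates, all_ses)
print(f"overall: {overall:+.3f} (SE {overall_se:.3f})")
```

Pooling within each commodity type recovers the opposing effects, while pooling everything together returns an estimate near zero.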

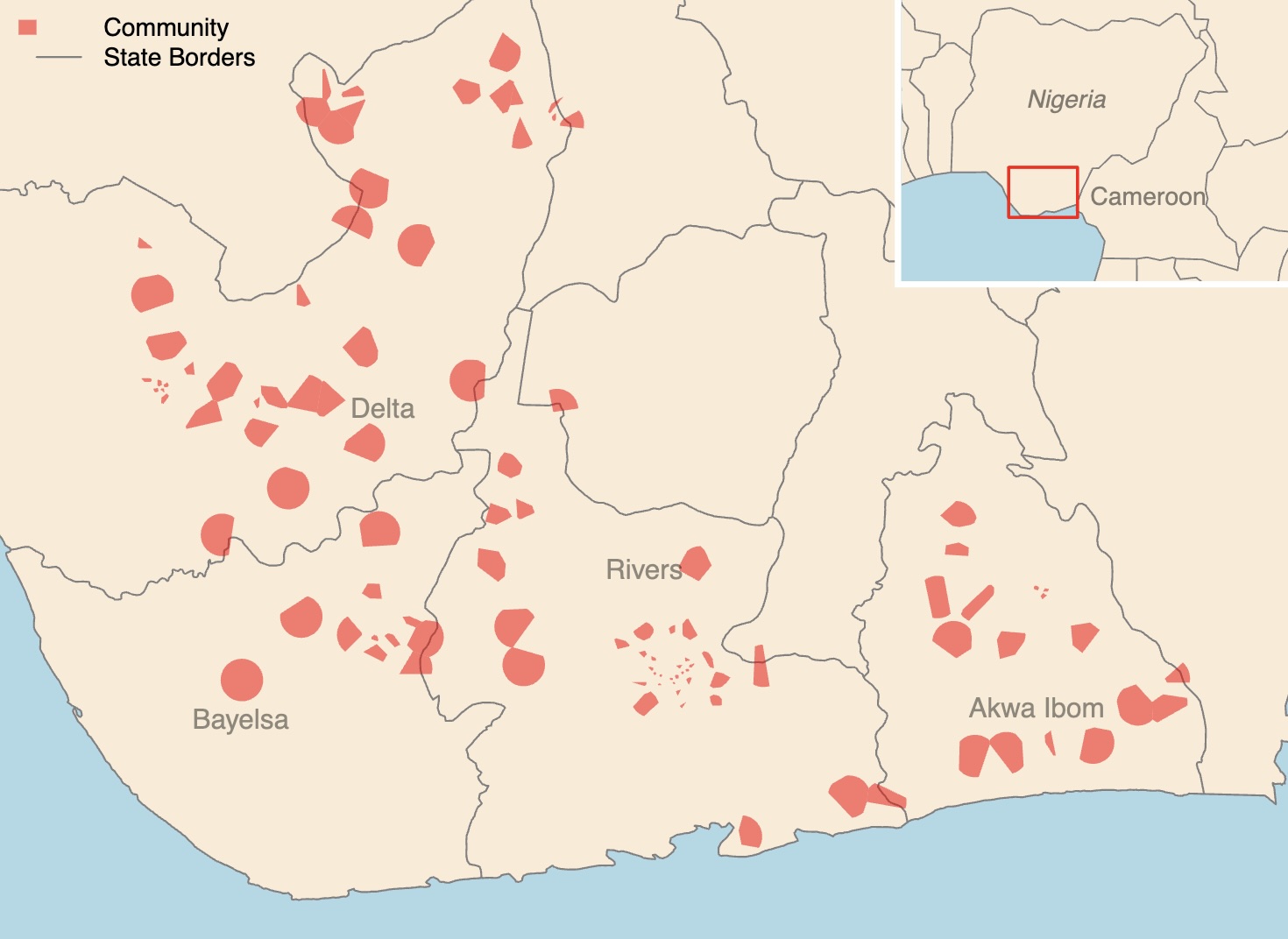

“Motivating the adoption of new community-minded behaviors: An empirical test in Nigeria.” Science Advances, 2019. With Rebecca Littman and Elizabeth Levy Paluck.


Social scientists have long sought to explain why people donate resources for the good of a community. Less attention has been paid to motivating people to adopt a new community-minded behavior. In a field experiment in Nigeria, we tested two campaigns that encouraged people to try reporting corruption by text message. Psychological theories about how to shift perceived norms and how to reduce barriers to action drove the design of each campaign. The first campaign, a film featuring actors reporting corruption, and the second, a mass text message reducing the effort required to report, caused a total of 1,181 people in 106 communities to text, including 241 people who sent concrete corruption reports. Psychological theories of social norms and behavior change can illuminate the early stages of the evolution of cooperation and collective action, when adoption is still relatively rare.

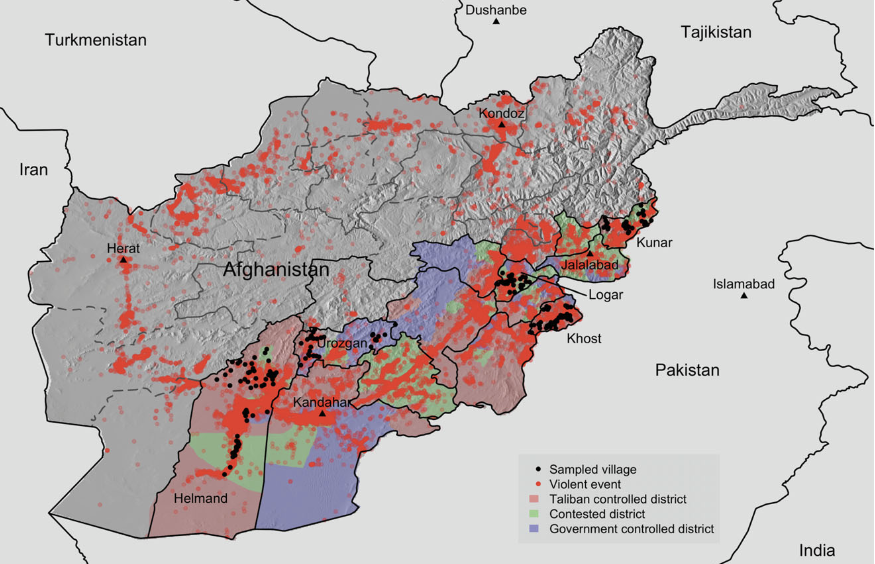

“Explaining support for combatants during wartime: A survey experiment in Afghanistan.” American Political Science Review, 2013. With Jason Lyall and Kosuke Imai.

MPSA Pi Sigma Alpha Best Article Award

How are civilian attitudes toward combatants affected by wartime victimization? Are these effects conditional on which combatant inflicted the harm? We investigate the determinants of wartime civilian attitudes towards combatants using a survey experiment across 204 villages in five Pashtun-dominated provinces of Afghanistan—the heart of the Taliban insurgency. We use endorsement experiments to indirectly elicit truthful answers to sensitive questions about support for different combatants. We demonstrate that civilian attitudes are asymmetric in nature. Harm inflicted by the International Security Assistance Force (ISAF) is met with reduced support for ISAF and increased support for the Taliban, but Taliban-inflicted harm does not translate into greater ISAF support. We combine a multistage sampling design with hierarchical modeling to estimate ISAF and Taliban support at the individual, village, and district levels, permitting a more fine-grained analysis of wartime attitudes than previously possible.

“Poverty and support for militant politics: Evidence from Pakistan.” American Journal of Political Science, 2013. With C. Christine Fair, Neil Malhotra, and Jacob N. Shapiro.

Policy debates on strategies to end extremist violence frequently cite poverty as a root cause of support for the perpetrating groups. There is little evidence to support this contention, particularly in the Pakistani case. Pakistan’s urban poor are more exposed to the negative externalities of militant violence and may in fact be less supportive of the groups. To test these hypotheses we conducted a 6,000-person, nationally representative survey of Pakistanis that measured affect toward four militant organizations. By applying a novel measurement strategy, we mitigate the item nonresponse and social desirability biases that plagued previous studies due to the sensitive nature of militancy. Contrary to expectations, poor Pakistanis dislike militants more than middle-class citizens. This dislike is strongest among the urban poor, particularly those in violent districts, suggesting that exposure to terrorist attacks reduces support for militants. Long-standing arguments tying support for violent organizations to income may require substantial revision.

Research design and methodology

Book

Research Design in the Social Sciences: Declaration, Diagnosis, and Redesign. Princeton University Press, 2023. With Alexander Coppock and Macartan Humphreys.


APSA Experiments Section Best Book Award

Reviewed in Nature

Assessing the properties of research designs before implementing them can be tricky for even the most seasoned researchers. This book provides a powerful framework—Model, Inquiry, Data Strategy, and Answer Strategy, or MIDA—for describing any empirical research design in the social sciences. MIDA enables you to characterize the key analytic features of observational and experimental designs, qualitative and quantitative designs, and descriptive and causal designs. An accompanying algorithm lets you declare designs in the MIDA framework, diagnose properties such as bias and precision, and redesign features like sampling, assignment, measurement, and estimation procedures. Research Design in the Social Sciences is an essential tool kit for the entire life of a research project, from planning and realization of design to the integration of your results into the scientific literature.

Articles

“Evidence needed for ethical social science.” Science, 2023. With Rebecca Littman, Rebecca Wolfe, and Sarah Ryan.

The history of science is littered with violations of the human rights of research participants. One response has been regulation. In the United States, the Belmont Report lays out ethical principles for human subjects research, which form the foundation of modern-day institutional review boards. According to the Belmont Report, ethics committees and investigators need to determine whether the benefits of the research outweigh the costs and whether the costs unevenly burden particular groups. However, the assumptions underlying such assessments can be flawed. Without evidence about the causal effects of social science research on participant welfare, researchers risk causing unexpected harms or missing unexpected benefits.

“Field experiments in the Global South: Assessing risks, localizing benefits, and addressing positionality.” PS: Political Science & Politics, 2022. Authors (order randomized): Biz Herman ⓡ Amma Panin ⓡ Nicholas Owlsley ⓡ Graeme Blair ⓡ Alex Dyzenhaus ⓡ Elizabeth Iams Wellman ⓡ Allison Grossman ⓡ Ken Opalo ⓡ Anisha Singh ⓡ Hannah Alarian ⓡ Lindsey Pruett ⓡ Yvonne Tan.

In this piece, we draw on our interdisciplinary experiences to develop a set of questions for RCT research in the Global South, suggesting ways to involve scholars and research staff who hail from the study site at every research stage. We maintain these interactions are not one-off exchanges, but rather opportunities to foster meaningful collaboration. We see such efforts as complementary to institutional efforts to recruit and retain graduate students and junior faculty from the Global South. We organize this piece by four distinct, yet interrelated research stages: idea generation, planning, implementation, and dissemination.

“Experiments in multiple contexts.” Handbook of Experimental Political Science, 2021. With Gwyneth McClendon.

In an effort to assess the generalizability of treatment effects across contexts, scholars (or teams of scholars) are increasingly conducting experiments around the same research questions in multiple country and subnational contexts. In this chapter, we categorize recent and ongoing efforts to conduct cross-context experiments into three types: “uncoordinated,” “coordinated, sequential,” and “coordinated, simultaneous.” We discuss some practical trade-offs across these types, arguing that coordinated cross-context designs offer the most promise for meta-analyses. We then draw attention to four areas in which the current approaches arguably all fall short in facilitating cumulative learning about treatment effects and treatment effect heterogeneity across contexts. We conclude by proposing some ways forward to continue improving our approach to learning about generalizability across contexts.

“When to worry about sensitivity bias: A social reference theory and evidence from 30 years of list experiments.” American Political Science Review, 2020. With Alexander Coppock and Margaret Moor.

Eliciting honest answers to sensitive questions is frustrated if subjects withhold the truth for fear that others will judge or punish them. The resulting bias is commonly referred to as social desirability bias, a subset of what we label sensitivity bias. We make three contributions. First, we propose a social reference theory of sensitivity bias to structure expectations about survey responses on sensitive topics. Second, we explore the bias-variance trade-off inherent in the choice between direct and indirect measurement technologies. Third, to estimate the extent of sensitivity bias, we meta-analyze the set of published and unpublished list experiments (a.k.a., the item count technique) conducted to date and compare the results with direct questions. We find that sensitivity biases are typically smaller than 10 percentage points and in some domains are approximately zero.

“Declaring and diagnosing research designs.” American Political Science Review, 2019. With Jasper Cooper, Alexander Coppock, and Macartan Humphreys.

Researchers need to select high-quality research designs and communicate those designs clearly to readers. Both tasks are difficult. We provide a framework for formally “declaring” the analytically relevant features of a research design in a demonstrably complete manner, with applications to qualitative, quantitative, and mixed methods research. The approach to design declaration we describe requires defining a model of the world (M), an inquiry (I), a data strategy (D), and an answer strategy (A). Declaration of these features in code provides sufficient information for researchers and readers to use Monte Carlo techniques to diagnose properties such as power, bias, accuracy of qualitative causal inferences, and other “diagnosands.” Ex ante declarations can be used to improve designs and facilitate preregistration, analysis, and reconciliation of intended and actual analyses. Ex post declarations are useful for describing, sharing, reanalyzing, and critiquing existing designs. We provide open-source software, DeclareDesign, to implement the proposed approach.
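To make the M, I, D, A declaration and Monte Carlo diagnosis concrete, here is a minimal Python sketch of a two-arm experiment. It is not DeclareDesign's API (DeclareDesign is an R package); the function names `simulate_once` and `diagnose`, the normal outcome model, and the default sample size and effect are all hypothetical choices for illustration.

```python
import random
import statistics

def simulate_once(rng, n=100, effect=0.3):
    # Model: untreated outcomes Y(0) ~ N(0, 1) and a constant effect, Y(1) = Y(0) + effect
    y0 = [rng.gauss(0, 1) for _ in range(n)]
    y1 = [y + effect for y in y0]
    # Inquiry: the sample average treatment effect
    estimand = statistics.fmean(b - a for a, b in zip(y0, y1))
    # Data strategy: complete random assignment of n // 2 units to treatment
    treated = set(rng.sample(range(n), n // 2))
    yt = [y1[i] for i in range(n) if i in treated]
    yc = [y0[i] for i in range(n) if i not in treated]
    # Answer strategy: difference in means with a Neyman-style standard error
    estimate = statistics.fmean(yt) - statistics.fmean(yc)
    se = (statistics.variance(yt) / len(yt) + statistics.variance(yc) / len(yc)) ** 0.5
    return estimand, estimate, se

def diagnose(sims=2000, seed=1, **design_params):
    rng = random.Random(seed)
    draws = [simulate_once(rng, **design_params) for _ in range(sims)]
    # Diagnosands: bias (mean of estimate minus estimand) and power at the 5% level,
    # using a normal approximation for the test statistic
    bias = statistics.fmean(est - estimand for estimand, est, _ in draws)
    power = statistics.fmean(abs(est / se) > 1.96 for _, est, se in draws)
    return {"bias": bias, "power": power}

print(diagnose())
```

Redesign then amounts to changing a design parameter and diagnosing again, for example comparing `diagnose()` with `diagnose(n=400)` to see how power responds to a larger sample.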

“List experiments with measurement error.” Political Analysis, 2019. With Winston Chou and Kosuke Imai.

Measurement error threatens the validity of survey research, especially when studying sensitive questions. Although list experiments can help discourage deliberate misreporting, they may also suffer from nonstrategic measurement error due to flawed implementation and respondents’ inattention. Such error runs against the assumptions of the standard maximum likelihood regression (MLreg) estimator for list experiments and can result in misleading inferences, especially when the underlying sensitive trait is rare. We address this problem by providing new tools for diagnosing and mitigating measurement error in list experiments. First, we demonstrate that the nonlinear least squares regression (NLSreg) estimator proposed in Imai (2011) is robust to nonstrategic measurement error. Second, we offer a general model misspecification test to gauge the divergence of the MLreg and NLSreg estimates. Third, we show how to model measurement error directly, proposing new estimators that preserve the statistical efficiency of MLreg while improving robustness. Last, we revisit empirical studies shown to exhibit nonstrategic measurement error, and demonstrate that our tools readily diagnose and mitigate the bias. We conclude this article with a number of practical recommendations for applied researchers. The proposed methods are implemented through an open-source software package.

“Design and analysis of the randomized response technique.” Journal of the American Statistical Association, 2015. With Kosuke Imai and Yang-Yang Zhou.

About a half century ago, in 1965, Warner proposed the randomized response method as a survey technique to reduce potential bias due to nonresponse and social desirability when asking questions about sensitive behaviors and beliefs. This method asks respondents to use a randomization device, such as a coin flip, whose outcome is unobserved by the interviewer. By introducing random noise, the method conceals individual responses and protects respondent privacy. While numerous methodological advances have been made, we find surprisingly few applications of this promising survey technique. In this article, we address this gap by (1) reviewing standard designs available to applied researchers, (2) developing various multivariate regression techniques for substantive analyses, (3) proposing power analyses to help improve research designs, (4) presenting new robust designs that are based on less stringent assumptions than those of the standard designs, and (5) making all described methods available through open-source software. We illustrate some of these methods with an original survey about militant groups in Nigeria.
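As an illustration of how the randomization device conceals individual answers yet still identifies the population prevalence, here is a minimal Python sketch of Warner's original design only, one of the several standard designs the article reviews. If each respondent privately answers the sensitive statement with probability p and its negation with probability 1 − p, then P(yes) = pπ + (1 − p)(1 − π), which can be solved for the prevalence π when p ≠ 0.5. The function names are hypothetical and are not the `rr` package's API.

```python
import random

def warner_estimate(answers, p):
    """Warner (1965) estimate of the prevalence pi of a sensitive trait.

    P(yes) = p * pi + (1 - p) * (1 - pi)  =>  pi = (P(yes) - (1 - p)) / (2p - 1)
    """
    if p == 0.5:
        raise ValueError("p = 0.5 makes the prevalence unidentifiable")
    share_yes = sum(answers) / len(answers)
    return (share_yes - (1 - p)) / (2 * p - 1)

def simulate_survey(n, pi, p, rng):
    """Hypothetical survey: True means the respondent answered 'yes'."""
    answers = []
    for _ in range(n):
        has_trait = rng.random() < pi
        # Outcome of the private randomization device, unseen by the interviewer
        asked_direct = rng.random() < p
        answers.append(has_trait if asked_direct else not has_trait)
    return answers

rng = random.Random(42)
answers = simulate_survey(n=20_000, pi=0.15, p=0.7, rng=rng)
print(round(warner_estimate(answers, p=0.7), 3))
```

The privacy protection has a price: dividing by 2p − 1 inflates the variance, so values of p closer to 0.5 protect respondents more but yield noisier estimates.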

“Comparing and combining list and endorsement experiments: Evidence from Afghanistan.” American Journal of Political Science, 2014. With Kosuke Imai and Jason Lyall.

List and endorsement experiments are becoming increasingly popular among social scientists as indirect survey techniques for sensitive questions. When studying issues such as racial prejudice and support for militant groups, these survey methodologies may improve the validity of measurements by reducing nonresponse and social desirability biases. We develop a statistical test and multivariate regression models for comparing and combining the results from list and endorsement experiments. We demonstrate that when carefully designed and analyzed, the two survey experiments can produce substantively similar empirical findings. Such agreement is shown to be possible even when these experiments are applied to one of the most challenging research environments: contemporary Afghanistan. We find that both experiments uncover similar patterns of support for the International Security Assistance Force (ISAF) among Pashtun respondents. Our findings suggest that multiple measurement strategies can enhance the credibility of empirical conclusions. Open-source software is available for implementing the proposed methods.

“Statistical analysis of list experiments.” Political Analysis, 2012. With Kosuke Imai.

The validity of empirical research often relies upon the accuracy of self-reported behavior and beliefs. Yet eliciting truthful answers in surveys is challenging, especially when studying sensitive issues such as racial prejudice, corruption, and support for militant groups. List experiments have attracted much attention recently as a potential solution to this measurement problem. Many researchers, however, have used a simple difference-in-means estimator, which prevents the efficient examination of multivariate relationships between respondents’ characteristics and their responses to sensitive items. Moreover, no systematic means exists to investigate the role of underlying assumptions. We fill these gaps by developing a set of new statistical methods for list experiments. We identify the commonly invoked assumptions, propose new multivariate regression estimators, and develop methods to detect and adjust for potential violations of key assumptions. For empirical illustration, we analyze list experiments concerning racial prejudice. Open-source software is made available to implement the proposed methodology.
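The simple difference-in-means estimator mentioned above can be sketched as follows. The control group reports how many of J control items apply to them, the treatment group sees the same list plus the sensitive item, and the difference in mean counts estimates the share holding the sensitive item (under the no design effect and no liars assumptions). This is a minimal Python sketch with hypothetical names and simulated data, not the `list` package's estimators, which extend this baseline to multivariate regression.

```python
import random
import statistics

def list_experiment_dim(treated_counts, control_counts):
    """Difference-in-means estimate of the sensitive-item share, with its SE."""
    est = statistics.fmean(treated_counts) - statistics.fmean(control_counts)
    se = (statistics.variance(treated_counts) / len(treated_counts)
          + statistics.variance(control_counts) / len(control_counts)) ** 0.5
    return est, se

# Hypothetical simulation: J = 3 control items, each endorsed with probability 0.4,
# and a sensitive item held by 20% of respondents.
rng = random.Random(7)
J, n_per_arm, prevalence = 3, 5000, 0.2

def count_control_items():
    return sum(rng.random() < 0.4 for _ in range(J))

control = [count_control_items() for _ in range(n_per_arm)]
treated = [count_control_items() + (rng.random() < prevalence) for _ in range(n_per_arm)]
est, se = list_experiment_dim(treated, control)
print(f"estimated prevalence {est:.3f} (SE {se:.3f})")
```

Because each respondent only reports a total count, no individual answer to the sensitive item is revealed unless the respondent endorses all or none of the items.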

Deportation Data Project

with David Hausman and Amber Qureshi

We collect and post public, anonymized U.S. government immigration enforcement datasets. We use the Freedom of Information Act to gather datasets directly from the government, and we also post datasets that the government has posted proactively or in response to others’ requests.

deportationdata.org

Survey in Afghanistan

With Jason Lyall and Kosuke Imai

Attitudes and behaviors of civilians in wartime Afghanistan related to the Taliban and ISAF coalition forces.

- Where: Five provinces of Afghanistan (Helmand, Khost, Kunar, Logar, and Urozgan)
- When: 18 January to 3 February 2011
- Sampling design: Multi-stage sample of provinces, districts, villages, households, and adults
- Survey implementer: Opinion Research Center of Afghanistan (private firm)

Survey in Maiduguri, Nigeria

with Rebecca Littman and Rebecca Wolfe

Survey on attitudes, norms, and behavioral intentions regarding reconciliation with former members of Boko Haram.

Replication data

Survey in Niger Delta, Nigeria

with Rebecca Littman and Elizabeth Levy Paluck

Norm perceptions, attitudes, and behavioral intentions regarding public corruption and corruption reporting among people living in four states of the Niger Delta region in southern Nigeria.

- Where: Four states in Nigeria (Akwa Ibom, Bayelsa, Delta, and Rivers)
- When: February 18, 2014 to May 11, 2014 (endline)
- Sampling design: 106 randomly selected communities defined by mobile phone coverage areas
- Survey implementer: TNS-RMS Nigeria (private firm)

Survey in Pakistan

with C. Christine Fair, Neil Malhotra, and Jacob N. Shapiro

Attitudes toward non-state armed groups in Pakistan.

- Where: Punjab, Sindh, Khyber Pakhtunkhwa, and Balochistan provinces
- When: April 21, 2009 to May 25, 2009
- Sampling design: Urban-rural-province stratified sample of Pakistan Federal Bureau of Statistics primary sampling units
- Survey implementer: SocioEconomic Development Consultants (private firm)

Research design planning and implementation

Polmeth Statistical Software Award

SIPS Commendation

“DeclareDesign: Declare and Diagnose Research Designs.” R package. With Jasper Cooper, Alexander Coppock, Macartan Humphreys, and Neal Fultz. ~85,000 downloads.

“estimatr: Fast Estimators for Design-Based Inference.” R package. With Jasper Cooper, Alexander Coppock, Macartan Humphreys, and Luke Sonnet. ~220,000 downloads.

“fabricatr: Imagine Your Data Before You Collect It.” R package. With Jasper Cooper, Alexander Coppock, Macartan Humphreys, Aaron Rudkin, and Neal Fultz. ~95,000 downloads.

Analyzing surveys with sensitive questions

“list: Statistical Methods for the Item Count Technique and List Experiment.” R package. With Kosuke Imai. ~180,000 downloads.

“rr: Statistical Methods for the Randomized Response Technique.” R package. With Yang-Yang Zhou and Kosuke Imai. ~65,000 downloads.

Getting started with DeclareDesign

DeclareDesign is a set of software tools to plan, implement, analyze, and communicate about empirical research.

Our book, Research Design in the Social Sciences, is the best place to start.

To learn about the approach, read Parts I and II. For examples that help apply the approach to many common research designs, read Part III. For ideas on incorporating the approach into the lifecycle of your project, read Part IV. You can buy the book on Amazon or read it free online.

Start with:

- Read Chapter 2 for a first introduction to the framework.

- Read Chapter 13 for an introduction to the software.

- declaredesign.org includes documentation for each of the software packages including further examples.

Expert work

I provide data analysis for strategic litigation on immigration enforcement in the United States. Below are selected cases I have worked on.

Overcrowding in ICE field office hold rooms

- Expert report, D.N.N. et al. v. Liggins et al., U.S. District Court for the District of Maryland (Opinion, Order for preliminary injunction)

Courthouse arrests

- Declaration, Pablo Sequen v. Albarran, January 2026, U.S. District Court for the Northern District of California

Use of foreign policy deportability grounds (8 U.S.C. §1227(a)(4)(C))

Related article in Slate, "The Bizarre Legal Theory Behind Mahmoud Khalil's Detention." (with Amber Qureshi, Fatma Marouf, and Elora Mukherjee)

- Declaration, Stanford Daily Publishing Corporation et al. v. Rubio et al., October 2025, U.S. District Court for the Northern District of California (with David Hausman)

- Declaration, Mahdawi v. Trump et al., September 2025, U.S. Court of Appeals for the Second Circuit (with David Hausman)

- Declaration, Mahdawi v. Trump et al., April 2025, U.S. District Court for the District of Vermont (with David Hausman)

- Declaration, Chung v. Trump, March 2025, U.S. District Court for the Southern District of New York (with David Hausman)

- Declaration, Khalil v. Joyce et al., March 2025, U.S. District Court for the District of New Jersey (with David Hausman)

Racial profiling in immigration enforcement raids

- Declarations (for motion to certify class and reply in support of motion to certify class), Vasquez Perdomo v. Noem, August 2025, U.S. District Court for the Central District of California

Evidence on torture risk in Convention Against Torture cases

- Brief of amicus curiae, Calderon v. Bondi, July 2025, U.S. Court of Appeals for the Ninth Circuit

Current dissertation students

| Student | Area of specialty | Dissertation title |
|---|---|---|
| Rachel Berwald (co-chair) | Comparative politics | Political Misinformation and Identity in Brazil |
| Daniel Carnahan | Comparative politics | Essays on the political economy of natural resources and climate |
| Jessica Cobian | Race and ethnic politics | The Emotional Effects of Xenophobic Rhetoric on Latino Immigration Attitudes |
| Samyu Comandur | Race and ethnic politics | Abortion Politics |
| Emily Ortiz | Race and ethnic politics | Latino Adults and the (Re)Surging Appeal of Republican Politics in a Racialized and Polarized America |
| Brigid Morris (chair) | Comparative politics | Popular Opinion and Democratization |
| Dario Sidhu (co-chair) | Comparative politics | Essays on evidence in policy |
| Elayne Stecher (co-chair) | Comparative politics | War, Interrupted: How Stress and Exposure to Violence in Conflict Zones Shape Behavior and Attitudes and the Role of Psychosocial Support in Preventing Conflict Recurrence |
| Carolyn Steinle (co-chair) | Comparative politics | Age-Set or Kinship Based Social Structure in Ethnic Politics |
| Alfredo Trejo III | International relations | The Contentious Politics of Trade: Public Demonstrations and their Impact on the Free Trade Agreement Negotiation and Ratification Process in Latin America |

Alumni

| Name | Role | Placement (next position) |
|---|---|---|
| Jiyoung Kim | Dissertation committee | Assistant Professor of Political Science, Michigan State University |
| Ryan Baxter-King | Dissertation committee | Assistant Professor of Political Science, University of Nevada, Reno |
| Ragini Srinivasan | Undergraduate research assistant | Ph.D. student in economics, MIT |
| Guanzhang Zhao | Undergraduate research assistant | Ph.D. student in economics, Yale |
| Safa Saleem | Undergraduate research assistant | Legal assistant, law firm |
| Jihae Hong | Staff researcher | Advisor, Auschwitz Institute for the Prevention of Genocide and Mass Atrocities |
| Cesar B. Martinez-Alvarez | Dissertation committee | Postdoctoral fellow, Yale; Assistant Professor of Political Science, UCSB |
| Aaron Rudkin | Graduate student researcher | Postdoctoral fellow, MIT |
| Luke Sonnet | Graduate student researcher | Lead data scientist, GrowthBook |
| Valerie Wirtschafter | Dissertation committee; graduate student researcher | Data analyst, Brookings Institution |
| Fatiq Nadeem | Staff researcher | Ph.D. student, UCSB Bren School |
| Emily Allendorf | Undergraduate research assistant | Quantitative research assistant, RAND Corporation |
| J. Sebastián Leiva M. | Staff researcher | M.P.P. student, Princeton |
| Jasmine Miller | Staff researcher | Researcher, GiveDirectly |
| Min Woo Sun | Undergraduate research assistant | Ph.D. student in biomedical data science, Stanford |
| Neal Fultz | Staff researcher | Independent data science consultant |

Courses at UCLA

POL SCI 150: Political Violence (undergraduate). Next: Winter 2026. Syllabus

POL SCI 170A: Randomized Trials for Social Change (undergraduate). Syllabus

POL SCI 200E: Experimental Design for Social Science (Ph.D. seminar). Next: Winter 2026. Syllabus

POL SCI 292B: Research Design (Ph.D. seminar). Syllabus

POL SCI 240a/b: Comparative Politics Field Seminar (Ph.D. seminar). Syllabus

I developed a course, taught by a graduate student, that introduces first-year graduate students to the R statistical programming language:

POL SCI 200X: Statistical Programming for Social Science (Ph.D. seminar). Syllabus

I regularly teach short courses on experimental design and planning, including on DeclareDesign. I'm happy to share materials.

Graduate advising

I actively advise and collaborate with graduate students in the political science Ph.D. program at UCLA. I'm also always happy to talk to other UCLA Ph.D. students even if I'm not advising you.

Undergraduate researchers

I regularly work with undergraduate student researchers at UCLA. Many other faculty also work with undergraduate researchers. The best way to find out which faculty do is to email those who share your interests, regularly check the Undergraduate Research Portal, and talk with counselors from the Undergraduate Research Center.

Recommendation letters

I'm happy to write letters for current and past students and research assistants. I need your materials at least two weeks before the submission deadline.

Prospective graduate students

You can find information about applying to the UCLA Ph.D. program here.

In preparing your application, I encourage you to read Jessica Calarco's excellent Field Guide to Grad School, as well as the advice from Chris Blattman, Macartan Humphreys, and Josh Kertzer.

Our department, like most in political science, does not admit students to work with specific faculty. Admissions decisions are made by a committee, on which I do not currently sit. However, you are welcome to mention my name in your personal statement to ensure that your application is sent to me during the admissions process.

Following the example of Betsy Paluck, I no longer have personal conversations with prospective students, in order to avoid favoring students who have received advice to connect with faculty or who have connections with my colleagues. If you are admitted, I will be eager to talk about working with you at UCLA.

Bio

Graeme Blair is a professor of political science at UCLA and faculty affiliate in statistics and the California Center for Population Research. Blair is Co-Director of the Deportation Data Project. He studies state violence and how to make social science more credible, ethical, and useful. Blair's book Research Design in the Social Sciences was published in 2023 by Princeton University Press and won the best book award from the American Political Science Association Experiments Section. Blair's second book, Crime, Insecurity, and Community Policing, was published in 2024 by Cambridge University Press in the Studies in Comparative Politics series. His articles are published in journals including Science, Proceedings of the National Academy of Sciences, American Political Science Review, American Journal of Political Science, Journal of Politics, Journal of the American Statistical Association, and Political Analysis. Blair's statistical software, including DeclareDesign, has been downloaded over a million times. He is the recipient of awards including the Leamer-Rosenthal Prize for Open Social Science and the Society for Political Methodology best statistical software award.